A few months ago, Humanity ran an experiment that should have been a wake-up call for anyone who still believes they can “just tell” when something online is fake. Using only widely available AI tools, we created Tinder profiles featuring entirely AI-generated people. These profiles bypassed verification systems and convinced more than 40 real individuals to agree to dates — at the same time, at the same location.

Here's what went down:

This didn't take sophisticated espionage or a custom-built machine learning lab, just consumer-grade AI tools and an understanding of how people behave online. We then ran a second experiment, on a much smaller scale within our community, to answer another burning question:

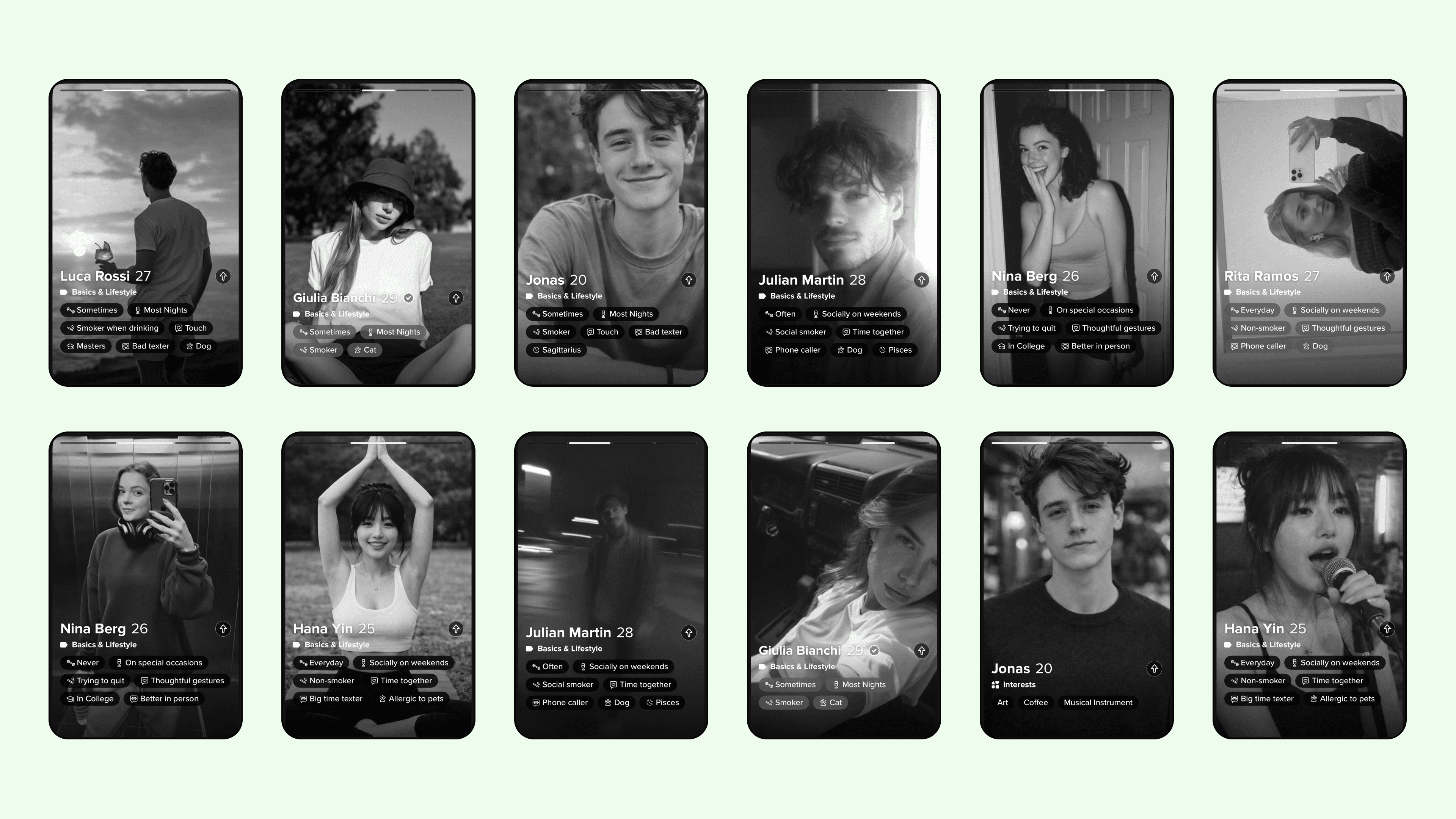

Could people spot the real human when they were explicitly told that fakes were present?

The Setup

The premise was simple. In each round, our community was shown a set of dating-profile-style photos. Among them was one real human. The rest were AI-generated faces created using tools that anyone with an internet connection could access.

Participants were told to identify the real person.

Across three rounds, we collected 851 total votes. What happened next surprised even us.

The Results

In the first round, only 23% of participants correctly identified the real human. In the third round, that number dropped even further to 19%.

Let that sink in. In two out of three rounds, fewer than one in four people could correctly distinguish a real human face from AI-generated ones, even when they knew fakes were present.

The second round performed much better, with 64% accuracy. It stood out immediately as an outlier. But even there, more than one-third of voters still failed. When aggregated across all rounds, overall accuracy landed between 35% and 36%, depending on whether you average percentages or weight by total votes. Only about one in three people could successfully identify the real human. And that’s in a setting where everyone knew it was a test.
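The two ways of aggregating mentioned above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis we actually ran: the function names are hypothetical, and because per-round vote counts are not broken out in this post, only the unweighted figure can be reproduced from the numbers given here. The vote-weighted function is included to show where the second figure would come from once per-round counts are supplied.

```python
# Per-round accuracy as reported in this post (percent of correct votes).
ROUND_ACCURACY = [23.0, 64.0, 19.0]


def unweighted_mean(accuracies):
    """Average the per-round percentages, treating every round equally."""
    return sum(accuracies) / len(accuracies)


def vote_weighted_mean(accuracies, votes):
    """Average the per-round percentages, weighting each round by its vote count.

    `votes` is an assumption of this sketch: the post reports 851 votes in
    total but not how they split across rounds, so the caller must supply it.
    """
    if len(accuracies) != len(votes):
        raise ValueError("need one vote count per round")
    total_votes = sum(votes)
    if total_votes <= 0:
        raise ValueError("total vote count must be positive")
    return sum(a * v for a, v in zip(accuracies, votes)) / total_votes


if __name__ == "__main__":
    print(f"Unweighted mean accuracy: {unweighted_mean(ROUND_ACCURACY):.1f}%")
    # prints "Unweighted mean accuracy: 35.3%"
```

If every round had received the same number of votes, the two methods would agree exactly. They differ here only because rounds drew different numbers of voters, which is why the overall figure shifts slightly depending on the method.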

There’s a common belief that AI-generated faces are detectable if you “look closely.” People talk about distorted hands, strange backgrounds, asymmetrical earrings, uncanny eyes. That advice is already outdated.

Modern generative models have dramatically improved facial symmetry, lighting realism, skin texture, and lens simulation. They produce faces that feel natural not because they are perfect, but because they replicate the statistical imperfections we associate with humanity.

The key psychological shift is this: humans evolved to trust faces. Facial recognition is one of our most refined cognitive abilities. We read micro-expressions, detect emotional cues, and infer trustworthiness in milliseconds. AI now manufactures those signals at scale. And as our experiment suggests, when we are forced to rely purely on visual judgment, we do not perform well.

From Dating Profiles to Digital Identity

The original Tinder experiment demonstrated that AI personas can pass verification and coordinate real-world outcomes. Our second experiment suggests something even more fundamental: visual authenticity itself is eroding. If people cannot reliably distinguish between real and synthetic faces, even when explicitly instructed to try, then identity verification cannot depend on intuition.

This extends beyond dating apps. It touches hiring processes, social engineering attacks, political misinformation, influencer culture, and financial scams. Anywhere identity is inferred from a face, the risk multiplies.

We are entering a phase where the question is no longer, “Is this fake?”

It’s, “What systems do we build assuming that fakes are indistinguishable?”

A generation ago, photographic evidence carried implicit credibility. Seeing was believing. Today, seeing is a coin flip. Our small Discord experiment is not a peer-reviewed academic study. It’s not a nationally representative sample. But it reflects something observable in broader society: AI-generated humans are good enough to defeat casual inspection.

And casual inspection is what most online interactions rely on. When roughly two out of three attempts to identify a real human fail, the baseline assumption about visual trustworthiness must change. The internet has always allowed people to mask identity. What’s different now is that the mask looks perfectly human. And increasingly, we can’t tell the difference.

For Enterprises

Looking to implement secure, privacy-preserving identity verification for your organization? Our enterprise solutions can help you eliminate fraud and build customer trust.

For Developers

Ready to integrate Humanity Protocol into your applications? Our developer tools make it easy to add human verification with just a few lines of code.